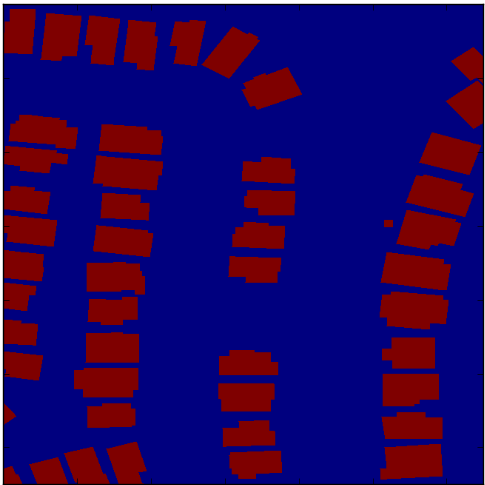

Within the remote sensing domain, a diverse set of acquisition modalities exists, each with its own unique strengths and weaknesses. Yet most of the current literature and open datasets deal only with electro-optical (optical) data for different detection and segmentation tasks at high spatial resolution. Optical data is often the preferred choice for geospatial applications, but requires clear skies and little cloud cover to work well. Conversely, Synthetic Aperture Radar (SAR) sensors have the unique capability to penetrate clouds and collect in all weather, day and night. Consequently, SAR data are particularly valuable in the quest to aid disaster response, when weather and cloud cover can obstruct traditional optical sensors. Despite all of these advantages, there is little open data available to researchers to explore the effectiveness of SAR for such applications, particularly at very high spatial resolutions, i.e. [...]. To address this problem, we present an open Multi-Sensor All Weather Mapping (MSAW) dataset and challenge, which features two collection modalities (both SAR and optical). The dataset and challenge focus on mapping and building footprint extraction using a combination of these data sources. The dataset covers [...] km² over multiple overlapping collects and is annotated with over 48,000 unique building footprint labels, enabling the creation and evaluation of [...]. We present a baseline and benchmark for building footprint extraction with SAR data and find that state-of-the-art segmentation models pre-trained on optical data and then trained on SAR (F1 score of 0.21) outperform those trained on SAR data alone (F1 score of 0.135).

The advancement of object detection and segmentation techniques in natural scene images has been driven largely by permissively licensed open datasets. For example, significant research has been galvanized by datasets such as ImageNet, MSCOCO, and PASCAL VOC, among others. Additionally, multi-modal datasets continue to be developed, with a major focus on 3D challenges, such as PASCAL3D+, Berkeley MHAD, Falling Things, or ObjectNet3D. Other modalities such as radar remain generally unexplored, with very few ground-based radar datasets, most of which are focused on autonomous driving, such as EuRAD and nuScenes. Although these datasets are immensely valuable, the models derived from them do not transition well to the unique context of overhead observation. Analyzing overhead data typically entails detection or segmentation of small, high-density, visually heterogeneous objects (e.g. cars and buildings) across broad scales, varying geographies, and often with limited resolution: challenges rarely presented by natural scene data.

SAR sensors collect data by actively illuminating the ground with radio waves rather than using reflected sunlight, as passive optical sensors do. The sensor transmits a wave, which bounces off the surface and returns to the sensor (known as backscatter). Consequently, SAR sensors succeed where optical sensors fail: they do not require external illumination and can thus collect at night. Additionally, radar waves pierce clouds, enabling visualization of Earth's surface in all weather conditions. Unlike in optical imagery, the intensity of a pixel in a radar image is not indicative of an object's visible color, but rather represents how much radar energy is reflected to the sensor. Reflection strength provides insight into the material properties or physical shape of an object. Depending on the target properties and the imaging geometry, the radar antenna will receive all, some, or none of the radio wave's energy. Furthermore, SAR sensors can collect in up to four polarizations by transmitting in a horizontal or vertical direction and measuring only the horizontally or vertically polarized part of the echo (HH, HV, VH, VV).
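As a rough illustration of the polarization naming and pixel intensity described above, the following Python sketch enumerates the four transmit/receive combinations and converts linear backscatter intensity to decibels, a common convention for viewing SAR imagery. The function name `intensity_to_db`, the `floor` constant, and the sample pixel values are assumptions made for illustration; they are not taken from the MSAW dataset or its tooling.

```python
import math
from itertools import product

# Each channel is named transmit-then-receive: "HV" means transmit H, receive V.
POLARIZATIONS = ["".join(pair) for pair in product("HV", repeat=2)]


def intensity_to_db(intensity, floor=1e-10):
    """Convert a linear backscatter intensity to decibels.

    `floor` is an assumed small constant that avoids log10(0) for
    pixels that returned no energy to the sensor.
    """
    return 10.0 * math.log10(max(intensity, floor))


# Hypothetical single-pixel intensities, one per polarization (made-up values).
pixel = dict(zip(POLARIZATIONS, [0.5, 0.02, 0.02, 0.1]))
pixel_db = {pol: round(intensity_to_db(value), 2) for pol, value in pixel.items()}

if __name__ == "__main__":
    for pol in POLARIZATIONS:
        print(pol, pixel_db[pol], "dB")
```

A stronger return gives a value closer to 0 dB, while weak returns fall far below it, which is why the logarithmic scale is often easier to inspect than raw intensity.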